Computer programs now make it easy to create fake videos that seem real. Many people are worried about how these tools might be misused.

In the last few years, computer experts have developed methods for creating fake videos that seem incredibly realistic. Most of the computer tools used to create these fakes involve Artificial Intelligence (AI).

(Source: dfaker [MPL-2.0], via Github.)

Much of today’s AI is built on techniques called “machine learning” and “deep learning”. These AI programs sort through huge amounts of information, which allows them to find patterns humans haven’t noticed. The programs can then use those patterns in many surprising ways.

Computer scientists have come up with several different ways of using AI to create false videos of people. These videos are usually called “deepfakes”, a name that combines “deep learning” and “fake”.

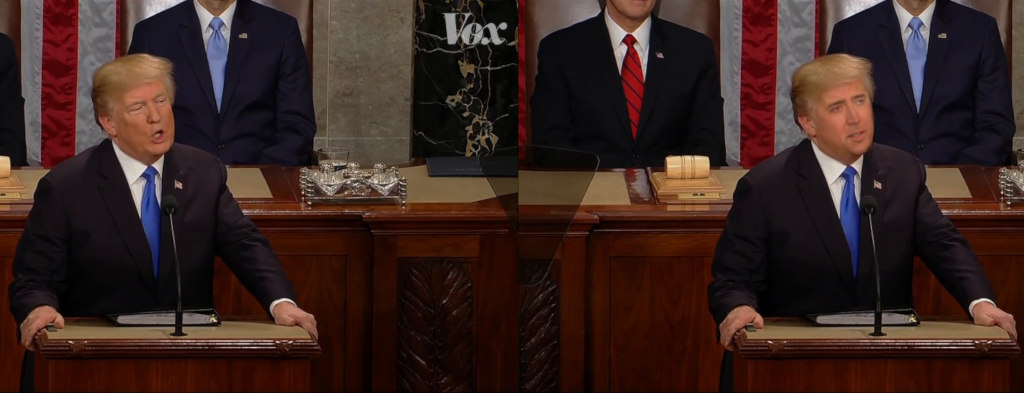

(Source: Jordan Peele, Screenshot via YouTube.)

Some deepfakes work by putting the face of one person onto a different person in a video. Others work by taking an existing video of a person and changing it so that the person says or does something they didn’t say or do.

Though some videos are clearly not quite right when you look closely, others are nearly impossible to spot as fakes.

At first, creating deepfakes was complicated. It required special knowledge, hundreds of pictures of the person who was being faked, and lots of time. Now it’s much simpler. There are websites and apps that allow almost anyone to create deepfakes.

(Source: FaceSwap, Screenshot via Github.)

In China, an app recently came out that allows users to put their faces into famous movie scenes. The process takes about eight seconds, requires one picture, and can be done on a mobile phone.

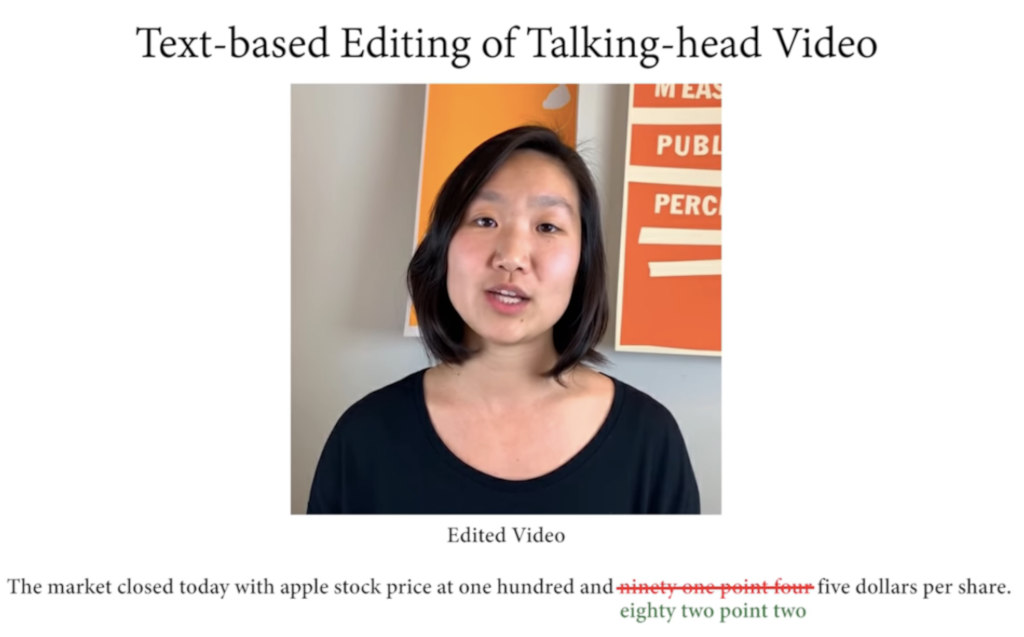

One group of computer scientists created a program that allows them to edit the words coming out of someone’s mouth in a video just like you might edit a document on the computer.

(Source: Ohad Fried, Screenshot via YouTube.)

Deepfakes raise serious worries. It’s one thing to swap the faces of famous actors. But what happens if someone puts out a fake video of a politician, for example, making it look like they broke the law?

There’s also the problem of the time it takes to figure out that something is fake. Even if a video is proven to be fake, it could be too late. Millions of people might have already seen and believed it.

These concerns aren’t just make-believe. In May, a video that was changed to make Nancy Pelosi appear drunk was spread widely across the internet. Ms. Pelosi, a Democrat, is the speaker of the US House of Representatives. That video wasn’t even a deepfake. It had simply been slowed down to make her speech sound slurred.

(Source: Gage Skidmore, Peoria, AZ, via Wikimedia Commons.)

Some people worry about the opposite problem. What happens if a video is actually real, but people don’t trust it because they’re told it’s a deepfake?

Many people believe that, sooner or later, deepfakes will begin to appear during elections. Some fear such videos might become part of the 2020 US presidential election.

Many deepfakes are so good that only another AI system can tell that they’re fake. Experts are working hard to create new AI tools that can detect faked videos, but it will be hard to stay ahead of the deepfakes, which are improving rapidly.

Did You Know…?

In March, thieves stole around $243,000 with a deepfake phone call. The leader of a British company got a call that sounded like it came from his boss, telling him to transfer the money to a bank account in Hungary. But the “boss” was actually a deepfake voice. By the time the man figured out the trick, the money was already gone.